Section 3

The Four Candidates

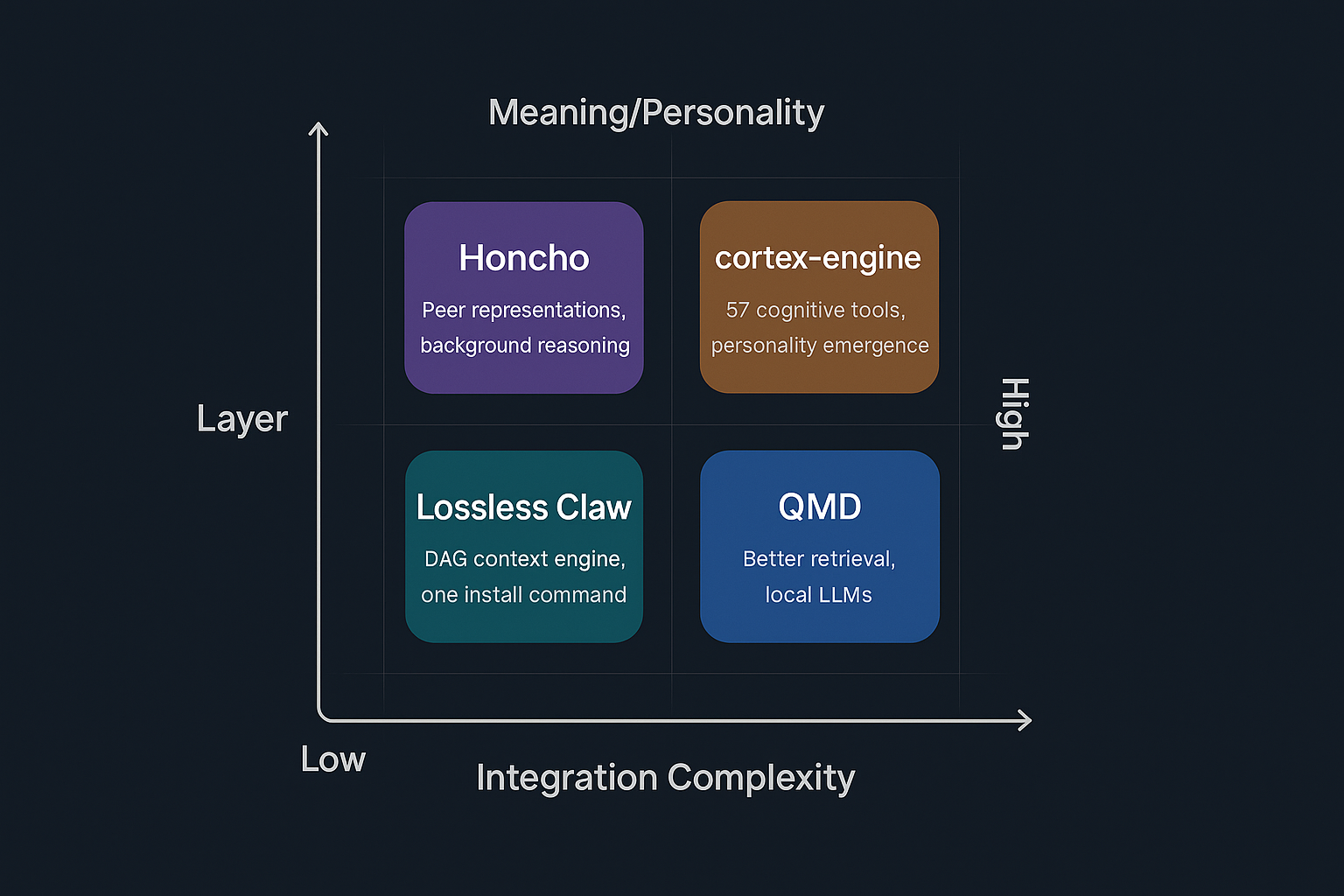

Four systems are worth knowing about. They sit at different positions on the infrastructure–meaning spectrum and vary widely in integration complexity.

Four systems mapped by memory layer and integration effort. Bottom = infrastructure. Top = meaning & personality.

Community plugin that replaces memory-core. Conversation-based memory using LanceDB — automatically extracts and stores facts, preferences, and decisions after each turn, and injects relevant context before every response.
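
As a rough illustration of the capture-then-inject loop described above, here is a minimal in-memory sketch. It is not the plugin's code: the class `ToyMemory`, its method names, the regex "extractor", and the word-overlap recall are all hypothetical stand-ins (a real setup would presumably use an LLM for extraction and LanceDB vector search for recall).

```python
import re

def tokens(text):
    """Lowercased word set, used for a crude overlap score."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

class ToyMemory:
    """Hypothetical stand-in for a conversation memory store."""

    def __init__(self):
        self.facts = []

    def capture(self, user_message):
        # Assumed extractor: keep sentences that look like a preference or decision.
        for sentence in re.split(r"(?<=[.!?])\s+", user_message.strip()):
            if re.search(r"\b(i prefer|i like|we decided|my name is)\b", sentence, re.I):
                if sentence not in self.facts:
                    self.facts.append(sentence)

    def recall(self, query, limit=2):
        # Word overlap stands in for vector similarity search.
        q = tokens(query)
        scored = [(len(q & tokens(f)), f) for f in self.facts]
        scored = [s for s in scored if s[0] > 0]
        scored.sort(key=lambda s: -s[0])
        return [f for _, f in scored[:limit]]

    def build_prompt(self, user_message):
        # Inject recalled facts ahead of the user's message.
        recalled = self.recall(user_message)
        context = "\n".join("- " + f for f in recalled)
        prefix = f"Relevant memories:\n{context}\n\n" if recalled else ""
        return prefix + f"User: {user_message}"

memory = ToyMemory()
memory.capture("I prefer dark roast coffee. What time is it?")  # after a turn
prompt = memory.build_prompt("Which coffee should I order?")    # before the next response
```

The point of the sketch is the ordering: capture runs after each turn, recall runs before each response, and the model only ever sees the injected subset.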

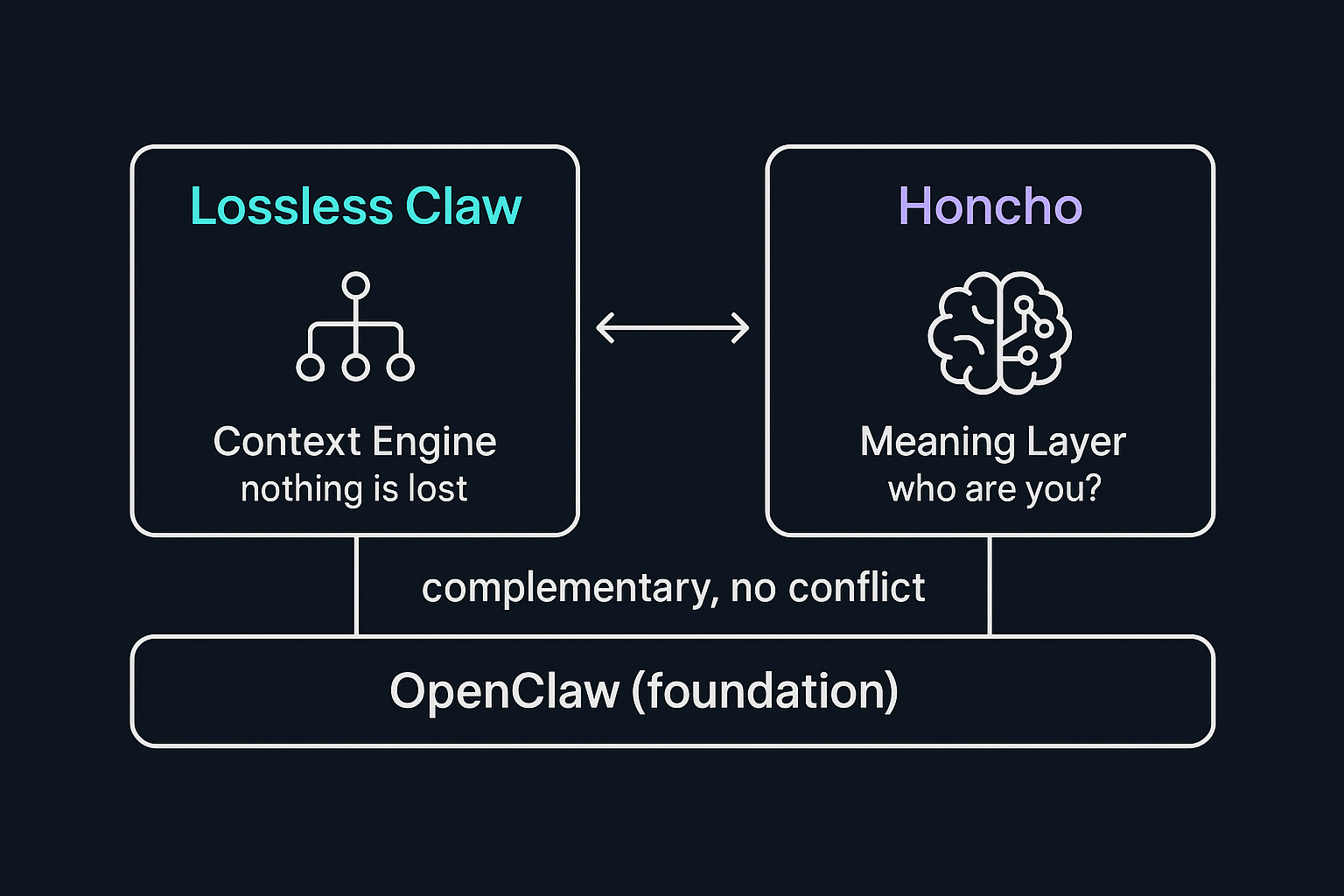

OpenClaw plugin that replaces the default compaction routine with a DAG (directed acyclic graph) of rolling summaries. Original session transcripts are preserved in SQLite, so nothing is ever thrown away.
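
To show why a graph of summaries can stay lossless, here is a minimal sketch under stated assumptions. It keeps everything in a Python dict rather than SQLite, and the names `SummaryDag`, `compact`, `expand`, and the `summarize` callback are hypothetical, not the plugin's API. Each summary node records which nodes it covers, so any summary can be expanded back to the original messages.

```python
from dataclasses import dataclass, field

@dataclass
class Node:
    node_id: int
    text: str
    sources: list = field(default_factory=list)  # IDs of the nodes this summary covers

class SummaryDag:
    """Hypothetical in-memory model of rolling summaries over preserved messages."""

    def __init__(self, summarize):
        self.summarize = summarize  # callable: list[str] -> str (an LLM call in practice)
        self.nodes = {}             # every node ever created; nothing is deleted
        self.active = []            # node IDs currently in the context window

    def _add(self, text, sources=()):
        node = Node(len(self.nodes), text, list(sources))
        self.nodes[node.node_id] = node
        return node.node_id

    def append(self, message):
        self.active.append(self._add(message))

    def compact(self, keep_last=2):
        # Replace everything except the most recent nodes with one summary node.
        split = max(len(self.active) - keep_last, 0)
        old, recent = self.active[:split], self.active[split:]
        if len(old) < 2:
            return
        summary = self.summarize([self.nodes[i].text for i in old])
        self.active = [self._add(summary, old)] + recent

    def expand(self, node_id):
        # Walk back through summaries to the original messages they cover.
        node = self.nodes[node_id]
        if not node.sources:
            return [node.text]
        originals = []
        for source_id in node.sources:
            originals.extend(self.expand(source_id))
        return originals

dag = SummaryDag(lambda texts: f"summary of {len(texts)} items")
for message in ["m1", "m2", "m3", "m4"]:
    dag.append(message)
dag.compact(keep_last=2)   # m1 and m2 fold into one summary node
dag.append("m5")
dag.append("m6")
dag.compact(keep_last=2)   # the earlier summary, m3, and m4 fold into a second summary
recovered = dag.expand(dag.active[0])
```

Compaction only changes which nodes are active. The older nodes stay in the store, which is what makes the second-level summary expandable all the way back to `m1` through `m4`.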

Local hybrid search engine that combines BM25 and vector retrieval with LLM reranking and query expansion. Runs on about 2 GB of GGUF models. Already integrated into OpenClaw; switch to it via config.
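
"Hybrid" here means merging a keyword ranking with an embedding ranking. One common way to do that is reciprocal rank fusion, sketched below. This is an assumption for illustration: the source does not say how this engine combines its BM25 and vector results, and `rrf_merge`, the constant `k=60`, and the document IDs are hypothetical.

```python
from collections import defaultdict

def rrf_merge(rankings, k=60):
    """Merge ranked lists of document IDs with reciprocal rank fusion.

    Each document scores sum(1 / (k + rank)) over the lists it appears in,
    with rank starting at 1. k=60 is a conventional default, assumed here.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by document ID for determinism.
    return sorted(scores, key=lambda d: (-scores[d], d))

bm25_hits = ["note-a", "note-b", "note-c"]    # hypothetical keyword ranking
vector_hits = ["note-c", "note-a", "note-d"]  # hypothetical embedding ranking
merged = rrf_merge([bm25_hits, vector_hits])
print(merged)  # ['note-a', 'note-c', 'note-b', 'note-d']
```

Documents that rank well in both lists rise to the top, while a document found by only one retriever still survives into the merged list, where a reranker could then reorder the leading candidates.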

External memory service that reasons over conversations and builds "Peer Representations" — living models of each participant that evolve with every interaction. Injects summaries automatically via Peer Cards.

MCP server with 57 cognitive tools: believe, contradict, evolve, dream, goal_set, and more. Models biologically inspired dream consolidation (NREM + REM phases). Built explicitly for personality development.